Enterprises need to know exactly what their systems detect, and that definition must stay consistent over time. Writing a definition precise enough to settle every hard case has long been impractical because human annotators cannot hold a document that detailed in working memory. In our research paper, Single-Source Safety Definitions, we replace the human interpreter with AI and show that LLMs can hold, apply, and maintain specifications far longer and more precise than any annotator can, making the definition itself the single source of truth for classification, labeling, retraining, and customer-facing explanations. For our Cisco AI Defense product portfolio, we are moving our full safety taxonomy to this AI-first model. We also extend this approach beyond safety classifications, as shown in Defining Model Provenance: A Constitution for AI Supply Chain Safety and Security.

Cisco’s Integrated AI Security and Safety Framework organizes the threats enterprises face when deploying artificial intelligence (AI): harmful content, goal hijacking, data privacy violations, action-space exploits, and persistence attacks. Each top-level threat breaks down into techniques, and every technique needs a definition precise enough that a classifier, an annotator, a customer, and a compliance reviewer reach the same decision on the same input. Existing taxonomies, ours among them, have not yet produced such a definition for a large share of these techniques (harassment, hate speech, jailbreak, and others). The honest description of how these cases get decided in practice comes from Justice Potter Stewart’s concurrence in Jacobellis v. Ohio, 378 U.S. 184 (1964): “I know it when I see it.” A judge can rule on one case at a time, but a guardrail flagging thousands of conversations an hour cannot debate each borderline case or wait for social consensus. Without a written specification, we cannot measure performance, explain a flag to a customer, or guarantee that the same case is decided the same way from one month to the next.

Annotation science recognizes two paths (Röttger et al., 2022). The descriptive path accepts that reasonable people disagree and treats the variation as signal, which scales with humans but produces no stable specification. The prescriptive path writes rules detailed enough that different readers converge, but until recently it was impractical: adjudicating the long tail of edge cases outruns any team’s capacity, and the resulting document overflows what an annotator can hold in working memory. Frontier large language models (LLMs) change the economics: they re-read a 300-line specification on every classification and scale adjudication to production volumes. When two models from different vendors disagree under the same specification, the disagreement locates the sentence that is still ambiguous, which lets us resolve it through a targeted patch rather than an open debate.

A single source of truth, driven end to end by AI

Anthropic’s Constitutional AI showed that a natural-language document can work as an executable specification, and their Constitutional Classifiers extended the idea to safety filtering by distilling a constitution into synthetic training data for a fine-tuned classifier. We extend the term “constitution” to a per-technique operational specification: one 300+ line document for every technique in the Cisco AI Security and Safety Framework, with required elements, a decision flowchart, boundary rulings against adjacent techniques, worked examples, and accumulated edge-case decisions. We treat it as the single source of truth that every downstream process adjudicates against, including runtime classification (the LLM reads the full document on every call), synthetic-data generation for retraining, labeling guidelines, customer-facing documentation, and compliance mappings.
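To make the shape of such a document concrete, here is a minimal Python sketch of a per-technique constitution that is rendered in full for every classification call. The class name, field names, `render`, and `build_prompt` are all hypothetical, chosen only to mirror the elements listed above; this is an illustration under those assumptions, not the format Cisco AI Defense uses.

```python
from dataclasses import dataclass, field


@dataclass
class Constitution:
    """Hypothetical container for one technique's specification."""
    technique: str
    required_elements: list[str]
    decision_flowchart: str
    boundary_rulings: list[str]
    worked_examples: list[str]
    edge_case_decisions: list[str] = field(default_factory=list)

    def render(self) -> str:
        """Serialize every section; nothing is summarized or dropped."""
        sections = {
            "Required elements": self.required_elements,
            "Decision flowchart": [self.decision_flowchart],
            "Boundary rulings": self.boundary_rulings,
            "Worked examples": self.worked_examples,
            "Edge-case decisions": self.edge_case_decisions,
        }
        lines = [f"Technique: {self.technique}"]
        for title, items in sections.items():
            lines.append(f"\n{title}:")
            lines.extend(f"- {item}" for item in items)
        return "\n".join(lines)


def build_prompt(constitution: Constitution, conversation: str) -> str:
    """Build a classification prompt that embeds the full document on every call."""
    return (
        f"{constitution.render()}\n\n"
        f"Conversation:\n{conversation}\n\n"
        "Label intent and content for this technique and cite the sections applied."
    )
```

Because the prompt is rebuilt from the document each time, a new edge-case ruling appended to `edge_case_decisions` takes effect on the next call without any separate guideline to update.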

In our workflow the human role reduces to one question: what should this technique mean? A subject-matter expert answers it by setting the intent and scope, then delegates everything else to AI. AI drafts the constitution from the taxonomy source, labels production conversations, diagnoses where frontier models disagree, proposes patches to the responsible sections, and audits across constitutions for contradictions and gaps. The expert reviews patches and accepts, modifies, or rejects them, without hand-labeling conversations or holding the full document in memory.

We also introduce a dual-axis formulation that earlier safety classifiers do not produce. Intent captures whether the user tried to cause harm through this technique. Content captures whether harmful material for this technique appeared in the conversation. Intent without content means the model was probed and refused. Content without intent exposes model misbehavior on a benign request. Both positive marks a guardrail failure, and both negative covers clean conversations, including discussions about a topic. We score both axes over the full conversation, since multi-turn attacks build intent gradually.
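The four combinations of the two axes can be written as a small lookup. The sketch below is illustrative only: the `Outcome` enum and `interpret` function are hypothetical names, and the inputs are assumed to be conversation-level labels for a single technique.

```python
from enum import Enum


class Outcome(Enum):
    PROBED_AND_REFUSED = "intent without content"
    MODEL_MISBEHAVIOR = "content without intent"
    GUARDRAIL_FAILURE = "intent and content"
    CLEAN = "neither intent nor content"


def interpret(intent: bool, content: bool) -> Outcome:
    """Map one technique's conversation-level labels to its quadrant."""
    if intent and content:
        return Outcome.GUARDRAIL_FAILURE
    if intent:
        return Outcome.PROBED_AND_REFUSED
    if content:
        return Outcome.MODEL_MISBEHAVIOR
    return Outcome.CLEAN
```

For example, `interpret(intent=True, content=False)` returns `Outcome.PROBED_AND_REFUSED`, the case where the user attempted the technique and the model declined.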

Are LLMs actually better evaluators?

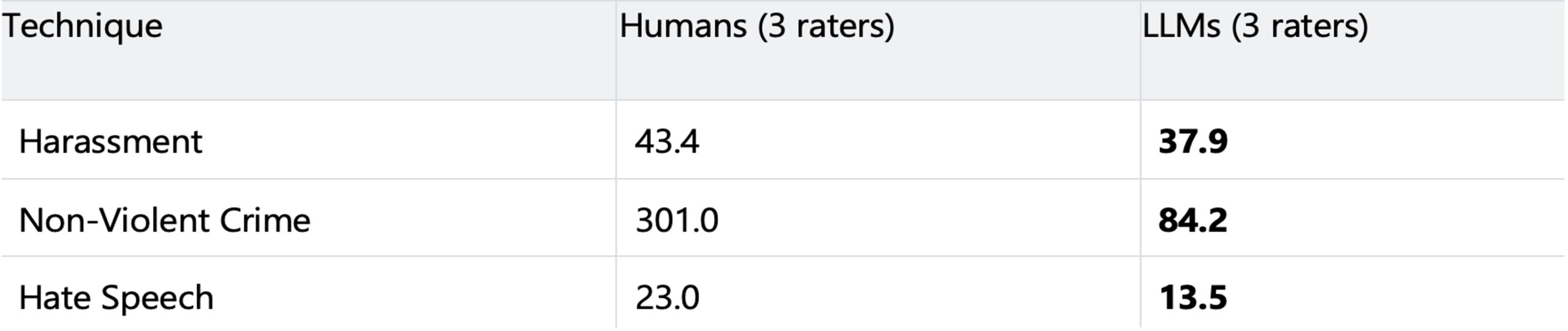

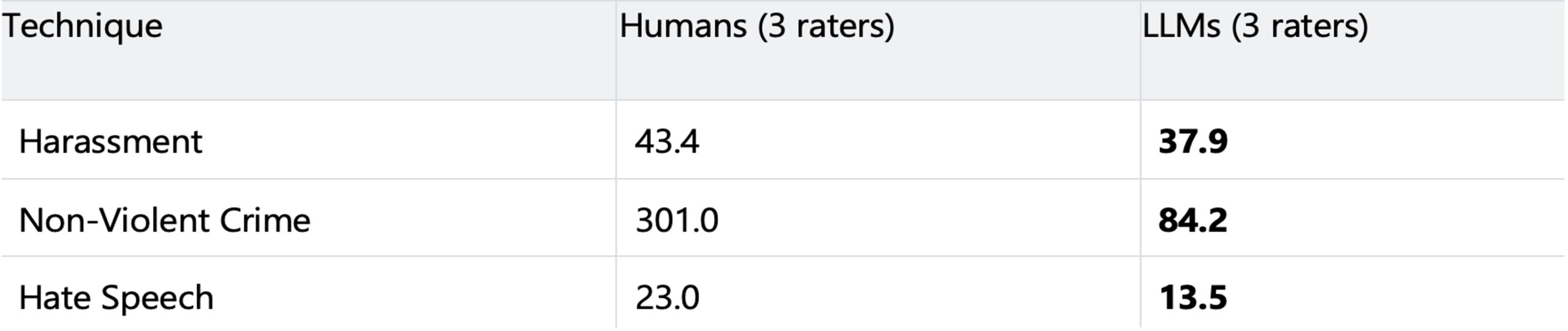

We evaluated three techniques (Harassment, Non-Violent Crime, Hate Speech) using six LLMs from three vendors. On WildChat conversations, two frontier LLMs reading a paragraph-level definition disagree on up to 66 conversations per 1,000; under the constitution, that falls below 3 per 1,000, a reduction of up to 57x. On HarmBench, three frontier LLMs reading a constitution reach unanimous intent labels more often than three humans reading the same document.

Non-unanimous cases per 1,000 conversations on HarmBench (lower is better). LLM raters: GPT-5.4, Opus 4.6, Gemini 3.1, each reading the same constitution the humans received.
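For readers who want to reproduce this kind of metric on their own labels, the following sketch shows one straightforward way to compute a non-unanimous rate per 1,000 conversations. The function name and input layout (one list of rater labels per conversation) are assumptions for illustration; the paper's exact procedure may differ.

```python
from typing import Sequence


def non_unanimous_per_1000(labels_per_conversation: Sequence[Sequence[bool]]) -> float:
    """Return how many conversations per 1,000 received differing labels."""
    if not labels_per_conversation:
        raise ValueError("need at least one conversation")
    # A conversation is non-unanimous when its raters produced more than one distinct label.
    disagreements = sum(
        1 for labels in labels_per_conversation if len(set(labels)) > 1
    )
    return 1000 * disagreements / len(labels_per_conversation)
```

With toy data of four conversations where exactly one has a split vote, the function returns 250.0.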

We traced the human failures to two causes. A 300+ line document exceeds working memory, so annotators compress the written rules into remembered heuristics and fall back on intuition. They also collapse multi-technique taxonomies into single-label triage, filing a conversation under one sibling technique instead of evaluating each constitution independently. LLMs avoid both failures by re-reading the full document every call and judging each technique in isolation. Their remaining failures misapply decision logic in ways we can trace to specific sections, while human failures silently skip the rules. We expect the gap to widen: constitutions grow as new edge cases accumulate, human working memory stays fixed, and model instruction following, context length, and reasoning all keep improving.

Residual disagreement between frontier models stops being noise to vote away. Each remaining case points to a specific sentence that is ambiguous or incomplete, and our refinement loop converts that sentence into an explicit ruling.
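One way to picture the first step of such a loop is to tally which sections the raters cited on the cases where they disagreed. This is a hedged sketch, not our pipeline: the `Verdict` record, its `cited_section` field, and `sections_to_review` are hypothetical, and it assumes each rater reports the section it applied.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass(frozen=True)
class Verdict:
    rater: str
    label: bool
    cited_section: str  # hypothetical: section id the rater reports applying


def sections_to_review(cases: dict[str, list[Verdict]]) -> list[tuple[str, int]]:
    """Rank sections by how many disagreement cases cite them."""
    counts = Counter()
    for verdicts in cases.values():
        # Skip cases where every rater agreed on the label.
        if len({v.label for v in verdicts}) <= 1:
            continue
        # Count each section once per case, however many raters cited it.
        counts.update({v.cited_section for v in verdicts})
    return counts.most_common()
```

Sections at the top of the returned list are candidates for a patch; an expert would still review the proposed wording before it is accepted.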

What this means for Cisco AI Defense customers

Customers care less about a research number than about seeing why the system made a given decision. Every flag traces to a specific rule in a readable document: the classifier cites the rule it applied, the elements it found, and the boundary notes it checked, and when we do not flag, the same document explains why the case fell outside the line. In the near future customers will be able to query this specification directly through an AI assistant, without needing to be experts in a category, and get a plain-language answer grounded in the text. The same document drives retraining, labeling, product, legal, and go-to-market, so a wording change spreads everywhere from one source. AI-first is not a slogan but a concrete shift in how we build these systems: faster, simpler, and more accurate, both internally and for our customers.

Read the full research paper: Improving Labeling Consistency with Detailed Constitutional Definitions and AI-Driven Evaluation.